The energy industry is experiencing a long-overdue shift—one where AI is no longer a novelty but a necessity. And in the nuclear and utility sectors, we’re beginning to see something we welcome: more vendors entering the space.

It’s a positive sign. The growing interest from startups, enterprise AI labs, and newly formed nuclear-focused technology companies shows that the market is ready to modernize. Everyone, from operators to regulators, is looking for smarter, faster, and more secure ways to manage highly regulated, high-impact work.

But here’s the truth: not all solutions are created equal.

Some new entrants are offering early-stage beta tools. Others are repackaging general-purpose AI under the banner of “nuclear transformation.” What many still lack is what we’ve spent the last four years building at Nuclearn—deep operational understanding, embedded security architecture, and proven use cases deployed at scale.

Validation of the Mission

We’re not threatened by more players in the space. We welcome them. Every new entrant, every investor conversation, every “nuclear AI” LinkedIn post is validating what we’ve already proven: AI is no longer optional in this industry—it’s essential.

Since 2021, we’ve been supporting real-world operations across 48+ nuclear and utility sites in the U.S., Canada, and the U.K. We’ve worked inside secure environments, with live operational data, building tools that move the needle on efficiency, accuracy, and safety.

In other words, we’re not experimenting. We’re executing.

How Nuclearn Sets the Standard

Nuclearn wasn’t adapted for nuclear—it was built for it. Our team of nuclear engineers, planners, and outage veterans knew that generic AI couldn’t meet regulatory, compliance, or cultural requirements. So we designed a platform that could.

Here’s what differentiates Nuclearn in an increasingly noisy space:

- Field-Proven Deployment: Our tools are actively in use at commercial nuclear sites—not in simulation, not in “pilot purgatory.”

- 10 CFR Part 810 Compliant: Our system architecture was designed with export control, cyber resilience, and data sovereignty in mind from day one.

- On-Prem & GovCloud Options: We know what IT, security, and operations teams need—and we offer deployment flexibility to match.

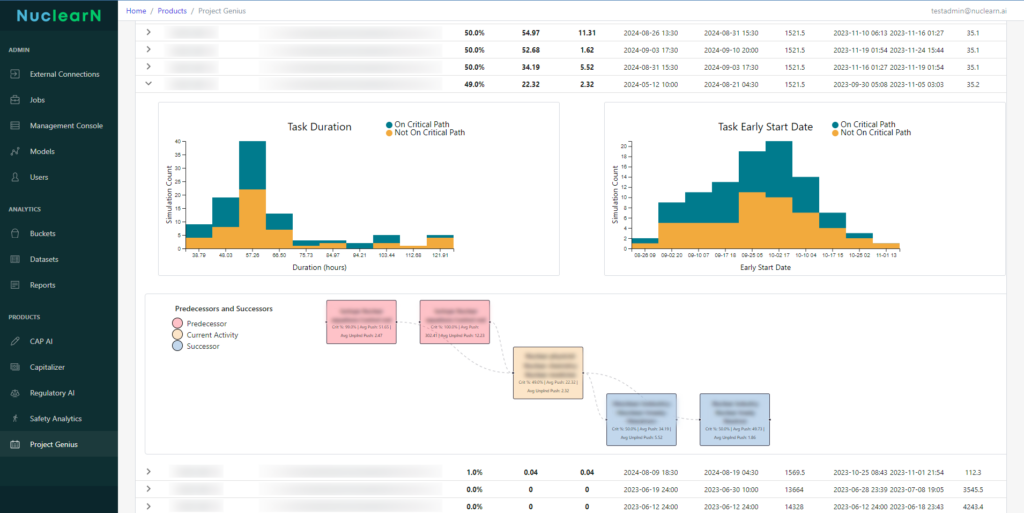

- Designed for Real Workflows: Procedure updates, FSAR crosswalks, outage readiness, tagging validation, safety documentation—these aren’t buzzwords. They’re everyday challenges we solve.

While others are still preparing for the work, Nuclearn is already helping teams:

- Cut hours from outage document prep

- Reduce the review burden on procedure writers

- Accelerate tagging accuracy during planning

- Analyze safety observations and generate reports in real time

Our platform doesn’t just talk about nuclear. It speaks the language fluently.

Why Competition Matters

Yes, we’re leading this category—but we don’t want to be alone in it. Innovation benefits from pressure and perspective. When more companies try to build for this space, we all learn what works, what doesn’t, and what’s required to earn trust in high-stakes environments.

Healthy competition pushes everyone to do better: for customers, for industry standards, and for the future of nuclear.

But let’s be clear: this isn’t an industry that has time for AI that “might” work. This is a mission-critical environment. There is no room for hallucinated citations, opaque black boxes, or half-secure integrations.

So while we’re glad the space is growing, we’ll continue focusing on the things that matter most:

Security. Compliance. Trust. And results.

Customers Aren’t Looking for Options—They’re Looking for Outcomes

What we’re hearing in the field is that buyers aren’t overwhelmed—they’re skeptical. Leaders at plants, utilities, and national labs are asking:

- Is it secure?

- Is it proven?

- Does it integrate with our workflow?

- Can we deploy it without adding risk?

This is where Nuclearn continues to stand apart, because our answers are:

✅ Yes.

✅ Yes.

✅ Yes.

✅ And yes.

We’ve never been interested in tech for tech’s sake. We’re here to build solutions that reduce friction, reclaim hours, and elevate the work of nuclear professionals.

The Bar Is High—And That’s a Good Thing

We’ve helped raise expectations. And we’re proud of that. Because when we hold ourselves—and our peers—to a higher standard, the entire industry benefits.

We want a world where:

- AI-powered documentation becomes the norm, not the exception

- Safety data is reviewed with contextual intelligence

- Engineers are free to engineer, not just fill out forms

That’s not science fiction. That’s what our users are doing with Nuclearn—right now.

Final Word

The rise of new entrants into the nuclear and utility AI space is exciting. It means this sector is finally getting the innovation attention it deserves.

But we’re not racing to catch up. We’re defining the pace.

We’re already supporting operations, delivering value, and earning the trust of nuclear’s most security-conscious customers.

We’re not the future of nuclear AI.

We’re its present.